If you’ve been reading the news, you’ve almost certainly seen something that involved Machine Learning. You might not have recognized the phrase, but it was there. Trust me. In fact, you’re already affected by Machine Learning whether you realize it or not. When you hear about automated decision-making algorithms, they are often Machine Learning. Self-driving cars? Siri, Cortana, Google Assistant? Yep - all powered somewhere, somehow by Machine Learning.

At its core, ML (Machine Learning) is pretty simple. It’s mainly about algorithms that learn from the data we have and then make predictions about data we haven’t seen yet. There’s theory: what can we say with mathematical certainty about what our algorithms can do, how much data do we need, how right or wrong will we be? There’s also practice: predicting earthquakes, creating computers that beat humans at board games, and driving cars.
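To make "learn from the data we have, then predict on data we haven’t seen" concrete, here is a minimal sketch in Python. It uses a toy nearest-neighbor approach, which is only one of many ML techniques, and the weather data, labels, and function names are all made up for illustration.

```python
# Toy 1-nearest-neighbor "learner": it memorizes labeled examples, then
# labels a new point with the label of its closest known example.
# All data and names here are invented for illustration.

def train(examples):
    """'Learning' in this toy is just storing the (features, label) pairs."""
    return list(examples)

def predict(model, features):
    """Return the label of the stored example closest to `features`."""
    def squared_distance(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))
    _, nearest_label = min(model, key=lambda pair: squared_distance(pair[0], features))
    return nearest_label

# Data we have: (hours of sunshine, temperature in Celsius) -> label
seen = [
    ((9, 30), "beach day"),
    ((8, 27), "beach day"),
    ((2, 10), "stay home"),
    ((1, 5), "stay home"),
]
model = train(seen)

# Data we haven't seen yet
print(predict(model, (7, 25)))  # beach day
print(predict(model, (3, 8)))   # stay home
```

Real systems use far more data and smarter algorithms, but the shape is the same: a training step that looks at known examples, and a prediction step that handles new ones.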

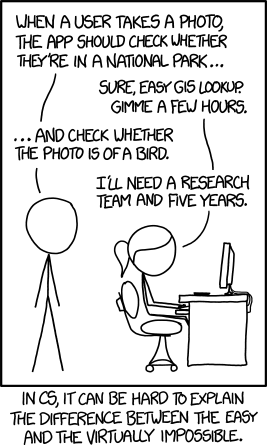

You might have heard of AI (Artificial Intelligence): the field dedicated to making computers do smart things (or act as silly as humans if you’re talking about Artificial General Intelligence). ML is a subset of AI. One way to think about them is that AI covers all manner of intelligence, while ML is about training programs that are almost as smart as a dog. Of course, it’s not always that easy: some ML programs are better than the humans they compete against, and some are dumber than bumblebees.

However, you don’t need a robot as smart as you to get lots of interesting work done. Imagine if your web browser were smart enough to hide the horrible comments you might see on a blog behind a “High Jerk Probability” warning. You could override the warning and read the comment, but by and large you wouldn’t, and large chunks of your soul would be spared the searing fire of Internet commenters’ rage.

In fact, the future of ML might be creating smart devices and programs that we learn to use. Humans are pretty adaptable and it’s no stretch to imagine that we would learn to work around the deficiencies of intelligent helpers. We already work with plenty of robots that manage parts of our lives for us: dish washers, clothes washers, heating and air conditioning, the cruise control on your car, and on and on. Even the oldest and cheapest of these have at least some “intelligence” baked in, even if it’s just a timer that shuts the appliance off.

One example is algorithmic diagnosis. A fancy AI robot doctor could talk to you about your symptoms and then give you your diagnosis. Unfortunately, it’s hard to get humans to list all their relevant symptoms, so we need a human for that. It also turns out that the intuition and diagnostic skills of experienced doctors are pretty hard to match. Pattern matching and learning over time are things we humans excel at.

What’s left for our poor AI robot? Well, not much - but there’s plenty for a humble ML diagnosing program. Doctors could take the patient history and record all the relevant symptoms. Experienced physicians could use the ML diagnosis to double check their own. If the ML diagnosis turns out to be wrong, that missed diagnosis helps the program make a better prediction next time.

Which is great, but it kind of sounds like we’re getting doctors to train ML devices for no reason, doesn’t it? However, you need to remember where experienced, trained physicians come from. We hatch them from novice medical students. While a doctor with twenty years of experience might not use ML diagnostics much, we can imagine a teaching hospital that requires medical students to record what an ML program told them - and why they decided to ignore it.

But wait, there’s more. Doctors frequently consult specialists. Medicine is difficult and ever-changing, so specialty consultations are often necessary to get someone with the right experience in front of the patient. It wouldn’t be as good, but what if your family physician could run your symptoms through the complete battery of ML specialty programs? Your swollen left ear and orange eyes might be curable after all!

Recently, at the 2016 International Symposium on Biomedical Imaging in Prague, there was a cancer detection contest. The best ML-based diagnostic program (based on something called Deep Learning) did pretty darn well. However, the top human team in the competition did even better. At first blush, it looks like humans win again. Except that when humans worked together with the ML program, their combined error rate was about 85 percent lower than that of the humans alone. This may well be the future of diagnostic medicine: ML technology assisting and augmenting highly trained physicians. You can read all about the details in the paper (linked in the References below). If you don’t want to read the paper, there was also a Yahoo news article.

Which brings us to an important point. Like a lot of technology and science reporting, that cancer news story at Yahoo uses some numbers (“92 percent accuracy” and so on) that are not true. The results were not judged with accuracy (“what percentage of my guesses were correct”). They were judged with “AUC” or “Area Under the Curve”, or more precisely “Area Under the Receiver Operating Characteristic Curve”. So what in the world is AUC? Let’s not get into that right now - we’ll say that it’s a score that looks like accuracy, but it takes into account both how often you correctly flag the real cases (the “true positive rate”) and how often you raise false alarms (the “false positive rate”).
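If you do want a quick peek at why the two scores differ, here is a small Python sketch. The patients and scores are invented, and the `auc` function uses the pairwise-ranking view of AUC (how often a randomly chosen sick patient gets a higher score than a randomly chosen healthy one), which gives the same number as measuring the area under the ROC curve. It is an illustration, not how the contest was actually scored.

```python
# Accuracy versus AUC on made-up data: 8 healthy patients (0), 2 with cancer (1).

def accuracy(labels, scores, threshold=0.5):
    """Fraction of patients labeled correctly when scores >= threshold mean 'cancer'."""
    predictions = [1 if s >= threshold else 0 for s in scores]
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)

def auc(labels, scores):
    """Chance that a random positive outscores a random negative (ties count half)."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))

labels = [0, 0, 0, 0, 0, 0, 0, 0, 1, 1]

# A lazy program that gives everyone the same low score ("nobody has cancer").
lazy_scores = [0.1] * 10

# A program that ranks patients sensibly but makes two mistakes at the 0.5 cutoff.
useful_scores = [0.1, 0.2, 0.1, 0.3, 0.2, 0.1, 0.6, 0.2, 0.4, 0.9]

print(accuracy(labels, lazy_scores), auc(labels, lazy_scores))      # 0.8 0.5
print(accuracy(labels, useful_scores), auc(labels, useful_scores))  # 0.8 0.9375
```

Both programs are "80 percent accurate", yet the lazy one never finds a single cancer. AUC tells them apart: 0.5 is no better than a coin flip, while 0.9375 means the useful program almost always ranks sick patients above healthy ones.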

Why does accuracy versus AUC matter? Well, it matters a lot if you’re working in Machine Learning. But if you’re an interested layperson reading about a cancer detection contest, should you care? Yes, you should. Math, science, computers, and, yes, machine learning are becoming more and more important to society. Demand accuracy from journalists when it comes to science. Especially when it comes to science!

Stepping off the soapbox now.

But before we conclude, there’s one term above that gets a LOT of press that you should probably know. Deep learning is all the rage now. You can find a good introduction over at DeepLearning.net if you’re interested in the technical nitty gritty. When we’re talking about machine learning or AI or algorithmic decision making, “deep learning” does not refer to any theory from educational psychology or some kind of inherently better learning. The “deep” in Deep Learning refers to artificial neural networks with many layers. The ideas behind neural nets were developed as early as the 1940s, fell out of favor in the late 1960s, and briefly came back into vogue in the 1980s.

After the 1980s, a few researchers continued work on neural networks. They made a few improvements and breakthroughs. Eventually they made enough progress, and computers got fast enough. The result was a slew of victories for neural networks. There are different architectures used by different researchers for different problems, but they generally share one trait: lots of layers. The “advanced” neural nets of the 80s usually had 3 layers, sometimes 2 layers, and occasionally 4 layers if you had fancy computers and lots of time on your hands. Today’s “deep” networks might have a dozen layers doing different things. Using “deep neural networks” to do machine learning became known as “deep learning”.
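To picture what "lots of layers" means, here is a rough Python sketch of data flowing through a stack of layers. The weights are hand-picked placeholders (a real network learns them from data), the sigmoid "squash" is just one common choice, and the function names are made up for this example.

```python
import math

def layer(inputs, weights, biases):
    """One fully connected layer: a weighted sum per neuron, squashed into (0, 1)."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(1.0 / (1.0 + math.exp(-total)))  # sigmoid
    return outputs

def forward(inputs, layers):
    """Feed the inputs through every layer in order; each output becomes the next input."""
    activations = inputs
    for weights, biases in layers:
        activations = layer(activations, weights, biases)
    return activations

# Hypothetical, hand-picked weights for illustration only.
first = ([[0.5, -0.4], [0.3, 0.8]], [0.0, 0.1])    # 2 inputs  -> 2 neurons
hidden = ([[0.6, -0.2], [-0.3, 0.9]], [0.0, 0.0])  # 2 neurons -> 2 neurons
last = ([[1.0, -1.0]], [0.0])                      # 2 neurons -> 1 output

shallow = [first, last]                # an old-school small network
deep = [first] + [hidden] * 10 + [last]  # same idea, a dozen stacked layers

print(len(shallow), forward([1.0, 2.0], shallow))
print(len(deep), forward([1.0, 2.0], deep))
```

The deep version runs exactly the same code as the shallow one; it simply has more layers to pass through. The hard part, and the focus of all that research, is finding good weights for every one of those layers.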

If you’ve heard about AlphaGo, that uses deep learning. Facebook, Google, Microsoft, Baidu, and lots of other high-tech companies are using deep learning for all kinds of things, including image recognition, language translation, and voice recognition. The current crop of self-driving cars all use some form of deep learning.

Which brings us to the end of our little introduction to Machine Learning. There’s plenty to cover in the future. If you’ve heard terms like regression, clustering, supervised learning, and unsupervised learning, those are all under the machine learning umbrella. Stay tuned for more!

References

Links used in this article (or that you might want to check out):

- Artificial Neural Networks: https://en.wikipedia.org/wiki/Artificial_neural_network

- Backpropagation: https://en.wikipedia.org/wiki/Backpropagation

- Deep Learning: https://en.wikipedia.org/wiki/Deep_learning

- DeepLearning.net: http://deeplearning.net/

- Detecting Cancer News Story: https://www.yahoo.com/news/ai-boosts-cancer-screens-nearly-100-percent-accuracy-161645260.html

- Detecting Cancer Paper: http://arxiv.org/abs/1606.05718

- Donald Hebb: https://en.wikipedia.org/wiki/Donald_O._Hebb

- Machine Learning: https://en.wikipedia.org/wiki/Machine_learning

- Perceptrons: https://en.wikipedia.org/wiki/Perceptrons_(book)